Green IT Strategy: How to Reduce Data Center Energy Costs 40% While Improving Performance

A green IT strategy is a framework for reducing energy consumption, minimizing operational costs, and maximizing system performance within a data center. For a CMO or marketing executive managing a growing digital infrastructure, wasted energy is a direct cost, and eliminating it is a direct saving. According to McKinsey’s data center economy research, cooling systems account for about 40% of total data center energy consumption, and most enterprise facilities operate at a fraction of their efficiency potential. The potential savings from a green IT strategy are not small; they are structural.

1. What Is a Green IT Strategy for Data Centers and Why Does It Matter for Your Budget?

Green IT strategy leverages improvements in energy efficiency, better cooling, server consolidation, and green energy to reduce the cost of operating data center infrastructure without compromising performance.

Data centers are one of the largest, and still growing, cost components in the IT landscape. Without a deliberate strategy to improve efficiency, that cost will rise in direct proportion to the volume of data and AI workloads.

Key reasons this matters right now:

- The World Economic Forum indicates that data center electricity consumption is likely to double by the year 2026

- Most enterprises operate their data centers at a Power Usage Effectiveness (PUE) of more than 1.55, meaning that for every unit of energy used for computing, more than half a unit again goes to overhead such as cooling and power distribution

- Google’s data center efficiency reports indicate that their fleet operates at a PUE of 1.09, which works out to roughly 84 percent less overhead energy for each unit of computing work compared to the industry average
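The PUE figures above can be checked with a few lines of arithmetic. The sketch below assumes the standard definition of PUE (total facility energy divided by IT energy), so overhead per unit of IT energy is PUE minus 1. The function names are illustrative, and the 1.56 industry average is the Uptime Institute figure quoted later in this article.

```python
def overhead_share_of_total(pue: float) -> float:
    """Fraction of total facility energy that does not reach IT equipment."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return (pue - 1.0) / pue


def overhead_reduction(pue_from: float, pue_to: float) -> float:
    """Relative drop in overhead energy per unit of IT energy."""
    if pue_from <= 1.0 or pue_to < 1.0:
        raise ValueError("pue_from must exceed 1.0 and pue_to must be at least 1.0")
    return (pue_from - pue_to) / (pue_from - 1.0)


# At a PUE of 1.55, overhead is 0.55 units per unit of IT energy,
# which is a little over a third of the total facility draw.
print(f"{overhead_share_of_total(1.55):.1%}")   # 35.5%

# Moving from the 1.56 industry average to 1.09 removes most of the overhead.
print(f"{overhead_reduction(1.56, 1.09):.1%}")  # 83.9%
```

The second figure is where the "84 percent" comparison comes from: it describes overhead energy only, not the total energy bill.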

A green IT strategy closes this gap. The result is lower costs, a smaller carbon footprint, and infrastructure that scales without a proportionate increase in cost.

2. How Does Cooling Optimisation Reduce Data Center Energy Costs?

Cooling offers the biggest efficiency gain in most data centers. Improving how it is handled can substantially cut the energy required for cooling without touching a single server.

The biggest cause of wasted cooling energy in data centers is that cold and hot air mix before reaching the servers. Separating them is the fastest fix.

Cooling strategies that have been proven to save energy:

- Hot and cold aisle containment: Physically separating cold and hot air flows can save up to 40% on required cooling capacity, as per Data Center Knowledge’s 2025 sustainability strategy guide

- Liquid cooling: McKinsey’s liquid cooling analysis confirms that liquid cooling systems reduce energy consumption by over 27% compared to air cooling, while also improving PUE

- Free air cooling: Using external air for cooling when external temperatures are suitable means that mechanical chillers do not need to be used

- Raising server inlet temperature: A temperature increase of 1 degree Fahrenheit in server inlet temperature saves between 4% and 5% in cooling energy cost

- Vertiv’s analysis of liquid cooling implementation found a 15.5% improvement in energy usage effectiveness after introducing liquid cooling into an air-cooled data center.
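The inlet-temperature figure in the list above can be turned into a rough planning estimate. This is a minimal sketch that assumes the 4% to 5% saving per degree Fahrenheit compounds with each additional degree and holds across the whole range; that is a simplifying assumption, not a measured curve, and real facilities should validate any setpoint change against equipment limits.

```python
def cooling_savings_range(
    degrees_f: int, low: float = 0.04, high: float = 0.05
) -> tuple[float, float]:
    """Estimated (low, high) fraction of cooling energy saved.

    Assumes each additional degree saves the same percentage of the
    cooling energy that remains after the previous degree.
    """
    if degrees_f < 0:
        raise ValueError("degrees_f must be non-negative")
    return (
        1.0 - (1.0 - low) ** degrees_f,
        1.0 - (1.0 - high) ** degrees_f,
    )


# Hypothetical example: raising the inlet setpoint by 3 degrees Fahrenheit.
low_est, high_est = cooling_savings_range(3)
print(f"{low_est:.1%} to {high_est:.1%}")  # 11.5% to 14.3%
```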

3. How Does Server Virtualisation Cut Energy Use in a Green IT Strategy?

Server virtualisation consolidates multiple workloads onto fewer physical servers, cutting the power wasted by hardware that runs at low utilisation while still drawing close to full power.

In most enterprise data centres, servers are running at only 10-15% of their overall capacity while consuming power near their maximum rating. This is power being wasted running hardware that is effectively idle.

What server virtualisation actually achieves:

- Major organisations have been able to increase server utilisation levels from less than 15% to 50% through server consolidation

- Containerisation with tools such as Kubernetes has been shown to provide higher density levels with lower resource requirements than a traditional virtualisation platform

- Workload scheduling can be used to place workloads on the most efficient hardware and automatically power down less efficient machines

The US Department of Energy’s Best Practices Guide for Energy-Efficient Data Center Design confirms that virtualisation dramatically reduces server count, cooling requirements, and total facility power draw as part of a single efficiency program.
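The effect of consolidation on server count and power can be sketched with a simple model. The example below assumes a linear power curve between idle and peak draw, and the 200 W idle and 400 W peak figures are hypothetical placeholders; only the 15% and 50% utilisation levels come from the text above.

```python
import math


def hosts_needed(current_hosts: int, current_util: float, target_util: float) -> int:
    """Hosts required to carry the same total work at a higher utilisation."""
    if not 0.0 < current_util <= target_util <= 1.0:
        raise ValueError("expected 0 < current_util <= target_util <= 1")
    total_work = current_hosts * current_util
    # Round before ceil so floating-point noise does not add a phantom host.
    return math.ceil(round(total_work / target_util, 9))


def host_power_watts(util: float, idle_w: float, peak_w: float) -> float:
    """Linear power model: idle draw plus a utilisation-proportional share."""
    return idle_w + (peak_w - idle_w) * util


# Hypothetical fleet: 100 hosts at 15% utilisation, consolidated to 50%.
IDLE_W, PEAK_W = 200.0, 400.0  # assumed values, not from the article
before_hosts, after_hosts = 100, hosts_needed(100, 0.15, 0.50)

before_w = before_hosts * host_power_watts(0.15, IDLE_W, PEAK_W)
after_w = after_hosts * host_power_watts(0.50, IDLE_W, PEAK_W)

print(after_hosts)                      # 30
print(f"{1.0 - after_w / before_w:.1%}")  # 60.9%
```

The saving is driven mostly by idle power: each retired host stops drawing its idle load, which is why consolidation pays off even though the remaining hosts work harder.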

4. What Role Does Renewable Energy Play in a Green IT Strategy?

Switching to renewable energy reduces both carbon emissions and long-term exposure to energy price volatility, because long-term renewable contracts offer more price stability than supply tied to fossil fuel prices.

Renewable energy is no longer simply a sustainability decision. It is a cost management decision.

How leading organisations are putting it into practice:

- The World Economic Forum states that “Power Purchase Agreements offer long-term energy pricing contracts, protecting against fossil fuel price fluctuations”

- Google uses DeepMind AI to optimise data center cooling, resulting in a potential savings of up to 40% in cooling costs, as reported by Google’s data center efficiency reporting

- AWS has made a commitment to 100% renewable energy, with the aim of taking carbon emissions out of its energy cost model

5. How Do DCIM Tools Support a Green IT Strategy?

Data Center Infrastructure Management (DCIM) software offers real-time visibility into energy usage, cooling capacity, and server utilization. This enables operators to detect problems early and correct them before they worsen.

“You can’t improve what you can’t measure.” DCIM solutions bridge the gap between the actual and desired performance of your infrastructure.

What DCIM solutions actually deliver:

- DCIM solutions can deliver 10 to 15 percent efficiencies by better coordinating IT and facility infrastructures in real-time, according to Data Center Dynamics’ analysis of power efficiency in AI data centers

- Continuous monitoring of PUE can detect optimization opportunities in real-time rather than waiting for regular audits

- Thermal imaging and computational fluid dynamics modeling deliver precise visibility into cooling performance down to the rack level

- Automated control of cooling, lighting, and power systems based on real-time demand data can eliminate human reaction time

McKinsey’s data center infrastructure research found that a 10 percent reduction in PUE is achievable using liquid cooling with intelligent control that adjusts cooling based on workload demand.
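Continuous PUE monitoring can be illustrated with a small drift check. This is a sketch only: the `MeterReading` structure, the sample values, and the 0.05 tolerance are assumptions for illustration and do not reflect any particular DCIM product's data model.

```python
from dataclasses import dataclass


@dataclass
class MeterReading:
    timestamp: str      # label for the sample, e.g. "11:00"
    facility_kw: float  # total facility power at the utility meter
    it_kw: float        # power delivered to IT equipment


def pue(reading: MeterReading) -> float:
    """Instantaneous PUE for one reading."""
    if reading.it_kw <= 0:
        raise ValueError("IT load must be positive")
    return reading.facility_kw / reading.it_kw


def flag_drift(
    readings: list[MeterReading], baseline_pue: float, tolerance: float = 0.05
) -> list[tuple[str, float]]:
    """Return (timestamp, PUE) for readings above baseline plus tolerance."""
    limit = baseline_pue + tolerance
    return [(r.timestamp, round(pue(r), 3)) for r in readings if pue(r) > limit]


# Hypothetical samples against a baseline PUE of 1.56.
samples = [
    MeterReading("09:00", 780.0, 500.0),
    MeterReading("10:00", 790.0, 500.0),
    MeterReading("11:00", 840.0, 500.0),
]
print(flag_drift(samples, baseline_pue=1.56))  # [('11:00', 1.68)]
```

A check like this surfaces a cooling or airflow problem within one sampling interval instead of at the next scheduled audit.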

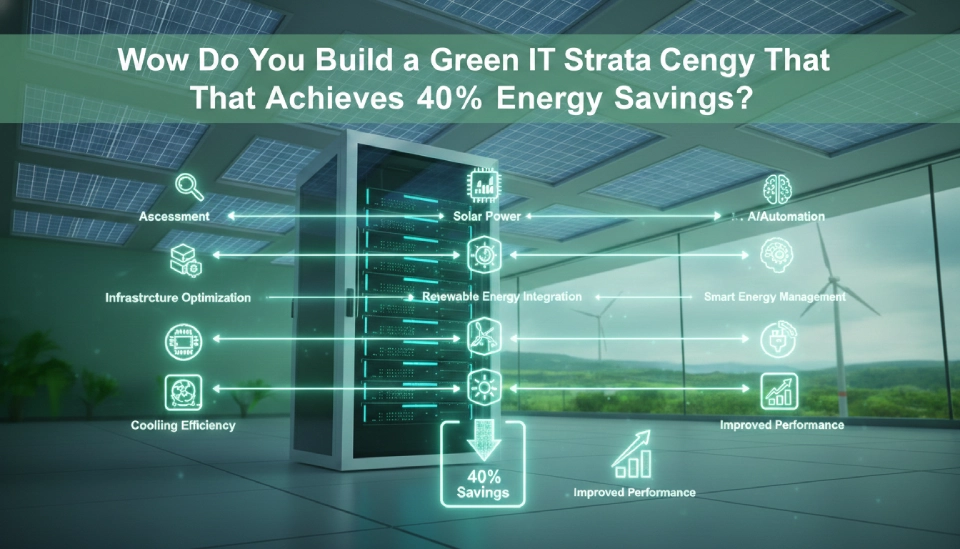

6. How Do You Build a Green IT Strategy That Achieves 40% Energy Savings?

The 40% reduction target is possible through compounding efficiencies in the areas of cooling, virtualization, workload management, and power infrastructure in a phased manner rather than in a single large effort.

No single strategy is effective in itself to achieve the 40% reduction. It is the combination of all strategies that makes the difference.
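Compounding can be made concrete with a short calculation. In the sketch below, each measure is assumed to cut whatever energy remains after the previous one, so reductions multiply rather than add. The per-measure percentages are hypothetical placeholders chosen for illustration, not figures from the sources cited in this article.

```python
from math import prod


def combined_reduction(step_reductions: list[float]) -> float:
    """Total fractional reduction when each step applies to the remainder."""
    for s in step_reductions:
        if not 0.0 <= s < 1.0:
            raise ValueError("each reduction must be in the range [0, 1)")
    return 1.0 - prod(1.0 - s for s in step_reductions)


# Hypothetical reductions in total facility energy per measure.
measures = {
    "airflow management": 0.10,
    "virtualisation": 0.15,
    "liquid cooling": 0.12,
    "intelligent controls": 0.10,
}

naive_sum = sum(measures.values())
compounded = combined_reduction(list(measures.values()))

print(f"{naive_sum:.1%}")   # 47.0%
print(f"{compounded:.1%}")  # 39.4%
```

Under these assumed numbers, no single measure exceeds 15%, yet together they approach the 40% target. The compounded total is lower than the simple sum because later measures act on an already reduced baseline.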

Phased approach with compounding efficiencies over time:

- Phase 1 (0-3 months): Conduct an energy audit and establish the current PUE baseline. Implement hot and cold aisle containment and raise server inlet temperatures. Low investment and quick payback.

- Phase 2 (3-6 months): Implement DCIM software for ongoing monitoring and measurement. Identify underutilized servers and begin virtualization and consolidation.

- Phase 3 (6-12 months): Deploy liquid cooling for high-density servers. Optimize power distribution units and replace UPS systems with high-efficiency units.

- Phase 4 (12-24 months): Source renewable energy through PPAs. Integrate AI into cooling control. Migrate suitable server workloads to the cloud.

The US Department of Energy’s energy-efficient data center design guide confirms that airflow management and virtualisation offer the best ratio of impact to investment cost, making them the right starting points before committing to capital-intensive upgrades.

7. What Metrics Should You Track in a Green IT Strategy?

Monitor PUE as your key efficiency measure, supplemented by Carbon Usage Effectiveness, Water Usage Effectiveness, and Cost per Unit of Compute to link efficiency gains to business results.

A single measure provides limited visibility. A balanced scorecard provides a strategy.

The Four Metrics You Should Care About Most:

- PUE (Power Usage Effectiveness): The ratio of total data center facility energy to IT equipment energy. According to the Uptime Institute’s 2024 Global Data Center Survey, the global data center industry’s average is 1.56, where it has stagnated since 2020. The highest performers achieve 1.09. The difference is your efficiency potential.

- CUE (Carbon Usage Effectiveness): The ratio of data center carbon emissions to IT energy. It becomes critical as sustainability reporting becomes increasingly mandatory under regulation.

- WUE (Water Usage Effectiveness): The ratio of data center water consumed to IT energy. It is important for facilities with evaporative cooling systems or in regions where water is scarce.

- Cost per Unit of Compute: The total infrastructure cost divided by the compute delivered. It links efficiency gains to business results so you can justify investment to your CFO and other business leaders.
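The four metrics above can be computed from a handful of period totals. The sketch below is illustrative: the `FacilityPeriod` structure, the sample values, and the choice of vCPU-hours as the compute unit are all assumptions, and each organisation will need to define its own unit of compute.

```python
from dataclasses import dataclass


@dataclass
class FacilityPeriod:
    facility_kwh: float   # total facility energy for the period
    it_kwh: float         # energy delivered to IT equipment
    co2_kg: float         # carbon emissions attributed to the facility
    water_litres: float   # water consumed by the facility
    total_cost: float     # total infrastructure cost, any currency
    compute_units: float  # assumed unit of compute, e.g. vCPU-hours

    def pue(self) -> float:
        """Total facility energy per unit of IT energy."""
        return self.facility_kwh / self.it_kwh

    def cue(self) -> float:
        """Kilograms of CO2 per kWh of IT energy."""
        return self.co2_kg / self.it_kwh

    def wue(self) -> float:
        """Litres of water per kWh of IT energy."""
        return self.water_litres / self.it_kwh

    def cost_per_compute_unit(self) -> float:
        """Total cost divided by compute delivered."""
        return self.total_cost / self.compute_units


# Hypothetical month of operation.
month = FacilityPeriod(
    facility_kwh=1_560_000,
    it_kwh=1_000_000,
    co2_kg=600_000,
    water_litres=1_800_000,
    total_cost=250_000,
    compute_units=5_000_000,
)
print(month.pue(), month.cue(), month.wue(), month.cost_per_compute_unit())
```

Tracking all four from the same period totals keeps the scorecard consistent, since PUE, CUE, and WUE share IT energy as their denominator.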

Data Center Knowledge’s 2025 sustainability strategy guide confirms that server consolidation and workload rightsizing consistently rank among the highest-value actions available, precisely because they improve multiple metrics simultaneously.

Key Takeaways

- A green IT plan targets cooling, virtualization, workloads, and renewable energy as a package. No single action alone has a 40% impact.

- McKinsey’s research puts cooling at about 40% of total data center energy consumption.

- Cooling consumes the most and has the most impact, so it should be addressed first.

- Liquid cooling reduces energy consumption by more than 27% compared to air cooling, and Vertiv’s analysis found a 15.5% improvement in energy usage effectiveness after adding liquid cooling to an air-cooled data center.

- Servers in most enterprise environments operate at 10% to 15% utilization levels. Consolidation through virtualization has raised that to around 50% at major organisations.

- DCIM software can provide a 10% to 15% efficiency improvement by coordinating the operation of the IT and facility infrastructure in real-time.

- Google’s fleet operates at a PUE of 1.09, which means about 84% less overhead than the industry average of 1.56 reported in the Uptime Institute’s 2024 survey.

- Renewable energy via PPAs not only has a positive impact on the environment but also makes business sense.

- Phase your project: Start with the low-cost airflow fixes, then add DCIM monitoring and virtualization, followed by upgrades to the cooling and power infrastructure, and finally renewable energy contracts.